Building Autonomous Racing Intelligence.

A fully self-built autonomous racing car featuring stereo visual SLAM, multi-sensor EKF fusion (wheel encoder, IMU, steering input), real-time path planning, and Ackermann steering control — running entirely on a Raspberry Pi 5 (8 GB).

The Platform

The Robot

A custom-built autonomous racing platform designed from the ground up.

Hardware Components

System Architecture

How the Robot Thinks and Moves

A tightly integrated stack — from raw sensor data to wheel actuation — running entirely on commodity hardware.

Perception

- ELP Global Shutter Stereo Camera

- STM LSM6DSOX IMU

- Wheel Encoder

- Steering Angle Input

- SGBM Stereo Depth

- ORB-SLAM3 / RTAB-Map

- Kalibr Calibration

Planning

- RRT / A* Global Planner

- Gap Following

- Pure Pursuit

- Racing Line Optimization

Control

- Arduino Nano RP2040 Connect

- Ackermann Steering (Servo)

- Brushless Motor + ESC

Autonomy in Motion

Real-time SLAM, path planning, and motor control — all running on commodity hardware.

Mapping and Localization

Perception Stack

Two independent SLAM systems run on the robot, each suited to different operating conditions and levels of sensor fusion.

Camera-IMU Calibration

Before any SLAM algorithm runs, the stereo camera and IMU must be spatially and temporally calibrated. Kalibr estimates the extrinsic transform between sensors and aligns their timestamps — a prerequisite for accurate visual-inertial odometry.

Camera-IMU Calibration (Kalibr)

Spatial and temporal calibration of the ELP stereo camera with the STM LSM6DSOX IMU using Kalibr. Required for accurate visual-inertial odometry.

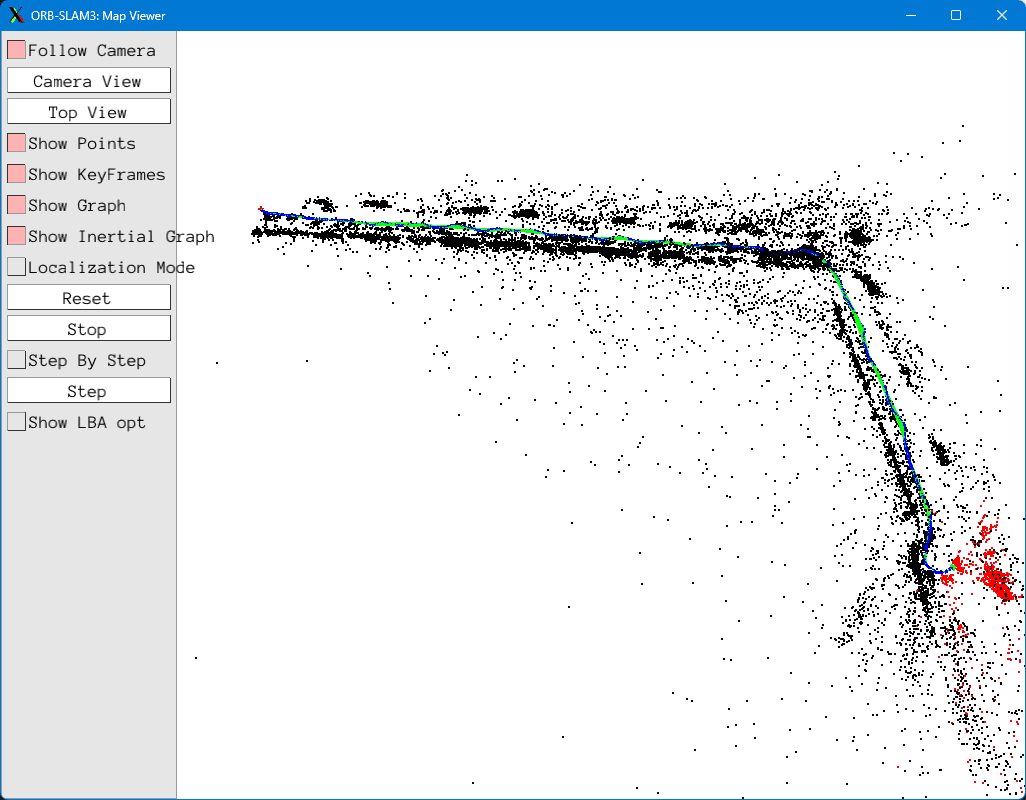

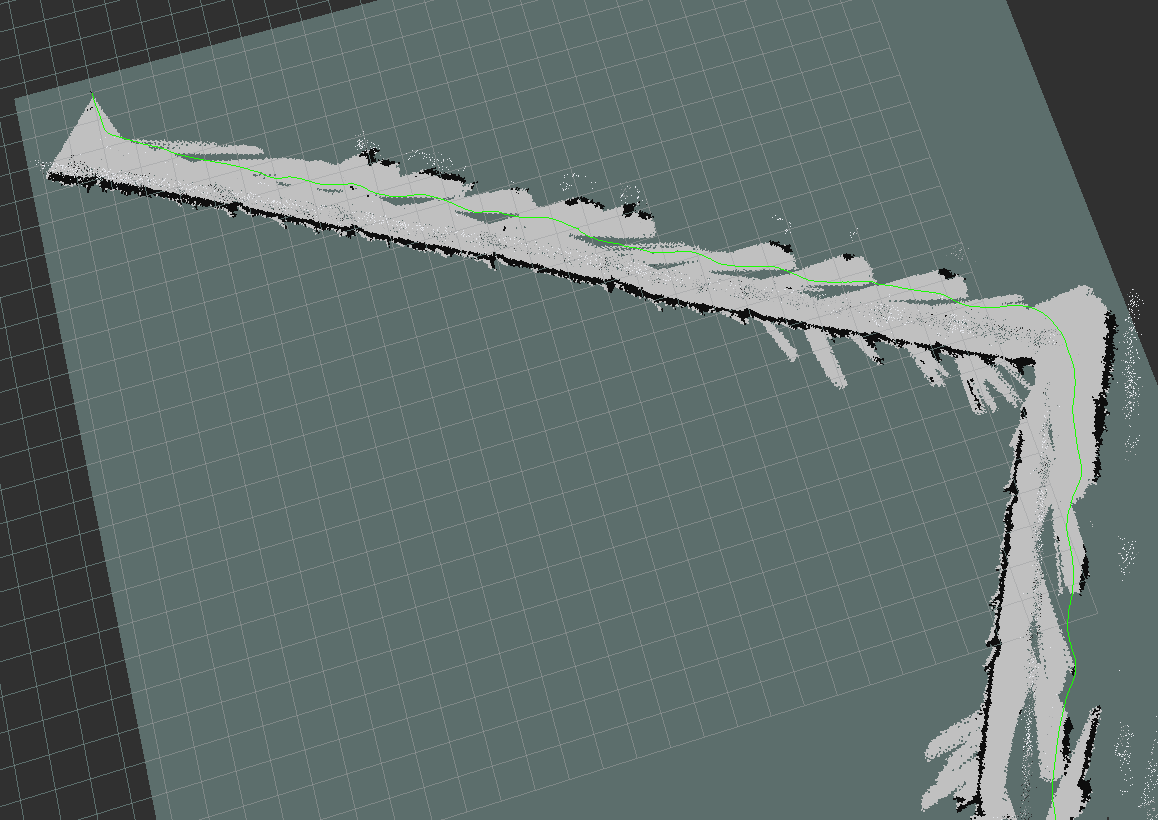

ORB-SLAM3

A stereo visual SLAM system that relies purely on the stereo camera — no IMU involved. ORB feature extraction produces a sparse 3D map with real-time loop closure and relocalization. The stereo camera is calibrated with Kalibr to ensure accurate stereo geometry for feature triangulation.

ORB-SLAM3 Corridor Mapping

Real-time stereo visual SLAM in an indoor corridor. Sparse point cloud with loop closure and relocalization.

ORB-SLAM3 Map Output

Zoomed-out view of the sparse 3D map built by ORB-SLAM3, showing the reconstructed point cloud and navigable corridors.

RTAB-Map

Unlike the ORB-SLAM3 setup, the RTAB-Map pipeline fuses proprioceptive sensors before building the map. Wheel encoder odometry, IMU measurements, and the commanded steering angle are fed into an Extended Kalman Filter (EKF) node, which produces a fused odometry estimate. RTAB-Map then combines this estimate with the camera's visual odometry, giving the system a more robust pose prior, especially in low-texture areas where purely visual methods drift.
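The fusion idea can be illustrated with a deliberately reduced sketch: a kinematic bicycle model serves as the prediction step (driven by encoder speed and commanded steering), and a scalar Kalman update blends in a heading measurement such as one derived from the IMU. This is not the project's EKF node. The state layout, the function names `predict` and `fuseYaw`, and the noise values are assumptions chosen for illustration.

```cpp
#include <cmath>

struct Pose2D { double x, y, yaw; };

// Prediction: propagate the pose over dt seconds with a kinematic bicycle
// model. v comes from the wheel encoder, steer is the commanded angle (rad).
Pose2D predict(const Pose2D& s, double v, double steer,
               double wheelbase, double dt) {
    const double yaw_rate = v * std::tan(steer) / wheelbase;
    return { s.x + v * std::cos(s.yaw) * dt,
             s.y + v * std::sin(s.yaw) * dt,
             s.yaw + yaw_rate * dt };
}

struct YawEstimate { double yaw; double var; };

// Correction: scalar Kalman update of the heading with a measurement of
// variance meas_var. The innovation is wrapped to [-pi, pi].
YawEstimate fuseYaw(const YawEstimate& prior, double meas, double meas_var) {
    const double kTwoPi = 6.283185307179586;
    const double innovation = std::remainder(meas - prior.yaw, kTwoPi);
    const double gain = prior.var / (prior.var + meas_var);
    return { prior.yaw + gain * innovation, (1.0 - gain) * prior.var };
}
```

A full EKF would carry a covariance matrix over the whole state and linearize the motion model at each step. The scalar update above only shows how the gain weights prediction against measurement.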

Sensor Fusion Pipeline

RTAB-Map Dense Reconstruction

Dense RGB-D SLAM generating a rich occupancy grid for navigation. Appearance-based loop closure handles revisited environments.

RTAB-Map Output Visualization

2D occupancy grid output from RTAB-Map — the primary map representation consumed by the path planner.

Stereo Depth Estimation

The stereo camera pair feeds a Semi-Global Block Matching (SGBM) pipeline running at 15 FPS entirely on the Raspberry Pi 5 (8 GB) CPU. The resulting dense depth map is published as a ROS 2 topic, leaving enough CPU headroom for SLAM, planning, and other algorithms to consume it simultaneously — no dedicated GPU needed.

SGBM Stereo Depth

Semi-Global Block Matching running at 15 FPS on the Raspberry Pi 5 (8 GB) CPU, leaving sufficient headroom for other algorithms to consume the depth map concurrently — no GPU required.

Path Planning

Where to Go and How to Get There

A layered planning stack — global route computation paired with reactive local obstacle avoidance — for safe, efficient trajectories.

RRT

A probabilistic sampling-based planner that efficiently explores high-dimensional configuration spaces to find feasible paths around obstacles.
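The core of a rapidly-exploring random tree (RRT), the sampling-based planner described above, is a single extension step: find the tree node nearest to a random sample and grow a bounded step toward it. A minimal 2D sketch, with collision checking and random sampling left to the caller; the `Node` layout and function names are hypothetical.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

struct Node { double x, y; int parent; };  // parent == -1 marks the root

// Index of the tree node closest to the query point. Assumes a non-empty tree.
std::size_t nearest(const std::vector<Node>& tree, double qx, double qy) {
    std::size_t best = 0;
    double best_d = std::hypot(tree[0].x - qx, tree[0].y - qy);
    for (std::size_t i = 1; i < tree.size(); ++i) {
        const double d = std::hypot(tree[i].x - qx, tree[i].y - qy);
        if (d < best_d) { best_d = d; best = i; }
    }
    return best;
}

// One RRT extension: step from the nearest node toward the sample by at
// most `step`. The caller should collision-check the new node before
// appending it to the tree.
Node extendToward(const std::vector<Node>& tree, double qx, double qy,
                  double step) {
    const std::size_t i = nearest(tree, qx, qy);
    const double dx = qx - tree[i].x;
    const double dy = qy - tree[i].y;
    const double dist = std::hypot(dx, dy);
    const double scale = (dist > step) ? step / dist : 1.0;
    return { tree[i].x + dx * scale, tree[i].y + dy * scale,
             static_cast<int>(i) };
}
```

The linear nearest-neighbor scan is fine for small trees; larger ones usually switch to a k-d tree.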

A*

Optimal grid-based path search using an admissible heuristic. Used for global route planning on the occupancy map generated by RTAB-Map.
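A compact sketch of A* on a 4-connected occupancy grid with unit step cost, using the Manhattan distance as the admissible heuristic. It returns only the path cost to keep the example short. The grid encoding (non-zero means occupied) and the function name are assumptions, not the format RTAB-Map publishes.

```cpp
#include <cstdlib>
#include <functional>
#include <queue>
#include <utility>
#include <vector>

// Number of moves on a shortest 4-connected path from (sr, sc) to (gr, gc),
// or -1 if the goal is unreachable. Assumes a non-empty rectangular grid.
int aStarCost(const std::vector<std::vector<int>>& grid,
              int sr, int sc, int gr, int gc) {
    const int rows = static_cast<int>(grid.size());
    const int cols = static_cast<int>(grid[0].size());
    auto h = [&](int r, int c) { return std::abs(r - gr) + std::abs(c - gc); };

    std::vector<int> g(rows * cols, -1);  // best known cost-so-far per cell
    using Item = std::pair<int, int>;     // (f = g + h, cell index)
    std::priority_queue<Item, std::vector<Item>, std::greater<Item>> open;

    g[sr * cols + sc] = 0;
    open.push({h(sr, sc), sr * cols + sc});
    const int dr[4] = {1, -1, 0, 0};
    const int dc[4] = {0, 0, 1, -1};

    while (!open.empty()) {
        const Item top = open.top();
        open.pop();
        const int idx = top.second;
        const int r = idx / cols, c = idx % cols;
        if (top.first > g[idx] + h(r, c)) continue;  // stale queue entry
        if (r == gr && c == gc) return g[idx];
        for (int k = 0; k < 4; ++k) {
            const int nr = r + dr[k], nc = c + dc[k];
            if (nr < 0 || nr >= rows || nc < 0 || nc >= cols) continue;
            if (grid[nr][nc] != 0) continue;         // occupied cell
            const int ng = g[idx] + 1;
            int& best = g[nr * cols + nc];
            if (best == -1 || ng < best) {
                best = ng;
                open.push({ng + h(nr, nc), nr * cols + nc});
            }
        }
    }
    return -1;
}
```

Recovering the actual route only requires storing a parent index alongside each cost and walking back from the goal.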

Gap Following

A reactive local planner that identifies the widest obstacle-free gap in the depth data and steers toward it. It is well suited to tight corridors at speed.
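The gap search described above reduces to finding the longest run of range readings beyond a clearance threshold. A minimal sketch, assuming the depth data has already been collapsed into a 1D array of ranges ordered across the field of view; the function name and threshold are illustrative.

```cpp
#include <vector>

// Index at the center of the longest run of readings farther than
// min_clearance, or -1 if no reading clears the threshold.
int widestGapCenter(const std::vector<double>& ranges, double min_clearance) {
    const int n = static_cast<int>(ranges.size());
    int best_start = -1, best_len = 0, run_start = -1;
    // Iterate one past the end so a run touching the last reading is closed.
    for (int i = 0; i <= n; ++i) {
        const bool is_free = (i < n) && (ranges[i] > min_clearance);
        if (is_free) {
            if (run_start < 0) run_start = i;
        } else if (run_start >= 0) {
            const int len = i - run_start;
            if (len > best_len) { best_len = len; best_start = run_start; }
            run_start = -1;
        }
    }
    return (best_len > 0) ? best_start + best_len / 2 : -1;
}
```

The returned index maps to a bearing through the sensor's angular resolution, and that bearing becomes the steering target.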

Pure Pursuit

A geometric path tracker that computes the steering angle required to intercept a lookahead point on the planned trajectory.
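For a bicycle model, the geometric tracker described above has a closed form: the arc through the rear axle and the lookahead point has curvature 2y / Ld², and the steering angle is atan(L * curvature). A minimal sketch, assuming the lookahead point is given in the vehicle frame (x forward, y left, origin at the rear axle); the function name is hypothetical.

```cpp
#include <cmath>

// Steering angle (rad, positive = left) that drives the rear axle along a
// circular arc through the lookahead point (tx, ty) in the vehicle frame.
double purePursuitSteer(double tx, double ty, double wheelbase) {
    const double lookahead_sq = tx * tx + ty * ty;  // Ld^2
    if (lookahead_sq <= 0.0) return 0.0;            // target at the axle
    const double curvature = 2.0 * ty / lookahead_sq;
    return std::atan(wheelbase * curvature);
}
```

A short lookahead tracks tightly but oscillates at speed, so the lookahead distance is often scaled with velocity.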

Trajectory Generation

Raw waypoints are smoothed into kinematically feasible trajectories respecting the Ackermann geometry. Velocity profiles are computed to maximize speed within safe lateral acceleration limits.
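The lateral acceleration constraint gives a per-waypoint speed ceiling directly: a = v² * |κ|, so v ≤ sqrt(a_max / |κ|). A minimal sketch of that first pass, with hypothetical names and limits. A complete profile would follow it with forward and backward passes that enforce longitudinal acceleration and braking limits.

```cpp
#include <algorithm>
#include <cmath>
#include <vector>

// Per-waypoint speed ceiling from the lateral acceleration limit.
// curvature:  path curvature at each waypoint (1/m, sign ignored).
// a_lat_max:  allowed lateral acceleration (m/s^2).
// v_max:      top speed used on straights (m/s).
std::vector<double> speedLimits(const std::vector<double>& curvature,
                                double a_lat_max, double v_max) {
    std::vector<double> limits;
    limits.reserve(curvature.size());
    for (double k : curvature) {
        const double abs_k = std::fabs(k);
        const double v = (abs_k > 1e-9)
                             ? std::min(v_max, std::sqrt(a_lat_max / abs_k))
                             : v_max;  // effectively straight
        limits.push_back(v);
    }
    return limits;
}
```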

Racing Line Optimization

For time-trial scenarios, an optimization pass computes the minimum-curvature racing line through waypoints — enabling the robot to carry maximum speed through corners.
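A minimum-curvature objective needs a way to score a candidate line. One common discrete choice, shown here as an assumption rather than the project's actual formulation, is the sum of squared Menger curvatures over consecutive waypoint triples. An optimizer would then shift waypoints within the track bounds to reduce this cost.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

struct Pt { double x, y; };

// Signed Menger curvature of the circle through a, b, c (positive for a
// left turn). Equals 4 * area / (|ab| * |bc| * |ca|); returns 0 for
// coincident points.
double curvature3(const Pt& a, const Pt& b, const Pt& c) {
    const double cross = (b.x - a.x) * (c.y - a.y) - (b.y - a.y) * (c.x - a.x);
    const double denom = std::hypot(b.x - a.x, b.y - a.y) *
                         std::hypot(c.x - b.x, c.y - b.y) *
                         std::hypot(a.x - c.x, a.y - c.y);
    return (denom > 0.0) ? 2.0 * cross / denom : 0.0;  // cross = 2 * area
}

// Sum of squared curvature over all interior waypoints of a path.
double curvatureCost(const std::vector<Pt>& path) {
    double cost = 0.0;
    for (std::size_t i = 1; i + 1 < path.size(); ++i) {
        const double k = curvature3(path[i - 1], path[i], path[i + 1]);
        cost += k * k;
    }
    return cost;
}
```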

Low-Level Execution

Control Architecture

Translating planned trajectories into precise wheel commands at millisecond timescales.

Ackermann Steering Model

The robot is rear-wheel drive with Ackermann steering geometry: the inner front wheel turns more sharply than the outer one, so every wheel follows an arc about a common turn center. This minimizes tire scrub and helps maintain stability at speed.
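The ideal Ackermann condition can be written out for a given turn radius. With wheelbase L, track width w, and radius R measured from the turn center to the middle of the rear axle, the inner and outer front wheel angles are atan(L / (R - w/2)) and atan(L / (R + w/2)). A minimal sketch; the struct and function names are hypothetical and the dimensions used in practice depend on the chassis.

```cpp
#include <cmath>

struct WheelAngles { double inner, outer; };  // radians

// Ideal Ackermann front wheel angles for a turn of radius turn_radius,
// measured to the center of the rear axle. Requires turn_radius > track / 2.
WheelAngles ackermannAngles(double wheelbase, double track,
                            double turn_radius) {
    return { std::atan(wheelbase / (turn_radius - track / 2.0)),
             std::atan(wheelbase / (turn_radius + track / 2.0)) };
}
```

On a single-servo RC chassis the linkage approximates this relationship mechanically, so software usually commands one equivalent center angle.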

PID Controller

Proportional-Integral-Derivative control loops regulate both longitudinal speed and lateral steering angle, continuously minimizing tracking error against the planned trajectory.

Model Predictive Control

MPC solves a finite-horizon optimization at each timestep, predicting future states and computing control inputs that minimize a cost function over the prediction window.
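The receding-horizon idea can be shown without a numerical solver by enumerating a few candidate steering angles, rolling each forward with a bicycle model, and keeping the cheapest. This brute-force search is a stand-in for the optimization a real MPC performs, and the cost weights, names, and constant-steer assumption are all illustrative.

```cpp
#include <cmath>
#include <limits>
#include <vector>

// Pick the candidate steering angle that minimizes accumulated lateral and
// heading error over the horizon. The reference is the line y = 0, yaw = 0.
// y0, yaw0: current lateral offset (m) and heading error (rad).
double mpcSteer(double y0, double yaw0, double v, double wheelbase,
                double dt, int horizon,
                const std::vector<double>& candidates) {
    double best_cost = std::numeric_limits<double>::infinity();
    double best_steer = 0.0;
    for (double steer : candidates) {
        double y = y0, yaw = yaw0, cost = 0.0;
        for (int k = 0; k < horizon; ++k) {
            // Predict one step ahead with the kinematic bicycle model.
            y += v * std::sin(yaw) * dt;
            yaw += v * std::tan(steer) / wheelbase * dt;
            cost += y * y + 0.1 * yaw * yaw;  // stage cost
        }
        if (cost < best_cost) { best_cost = cost; best_steer = steer; }
    }
    return best_steer;
}
```

Only the first input of the chosen plan is applied; the search repeats at the next timestep with the updated state.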

Arduino RP2040 Low-Level Interface

The Arduino Nano RP2040 Connect translates high-level velocity and steering commands from ROS 2 into PWM signals for the brushless motor ESC and steering servo, providing deterministic real-time actuation.
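The command-to-PWM translation is essentially a clamped linear map. The sketch below assumes the common hobby-servo convention of a 1500 µs neutral pulse with roughly ±500 µs of travel. The actual endpoints for this servo and ESC are not given in the source, so every constant and the function name are placeholders.

```cpp
#include <algorithm>
#include <cmath>

// Map a steering angle to a servo pulse width in microseconds.
// steer_rad:     commanded angle, positive = left.
// max_steer_rad: mechanical limit; commands beyond it are clamped.
// center_us / span_us: assumed neutral pulse and half-range of the servo.
int steeringToPulseUs(double steer_rad, double max_steer_rad,
                      int center_us = 1500, int span_us = 500) {
    const double clamped =
        std::max(-max_steer_rad, std::min(max_steer_rad, steer_rad));
    const double normalized = clamped / max_steer_rad;  // in [-1, 1]
    return center_us + static_cast<int>(std::lround(normalized * span_us));
}
```

Clamping on the microcontroller side protects the linkage even if the ROS 2 side sends an out-of-range command.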

Control Loop

Odometry and state feedback loop back to the controller

Tools and Technologies

Built With Precision

C++ — Dominant Codebase Language

C++ is the primary language across the robot's software stack. Its zero-overhead abstractions, deterministic memory management, and direct hardware access make it the right choice for real-time ROS 2 nodes where every microsecond of latency matters.

FreeRTOS on Arduino — Lowest Jitter, Highest Determinism

The Arduino Nano RP2040 Connect runs FreeRTOS to sample the IMU, read the servo position, and capture motor PWM feedback deterministically, then stream that data to the Raspberry Pi at a high, consistent rate. Preemptive, priority-based task scheduling reduces the jitter inherent in bare loop() polling, so sensor readings arrive at the Pi with low, predictable latency.

Platform Comparison

How It Compares

A detailed comparison of sensor capability, algorithm coverage, real-time engineering, and industry readiness across the leading small-scale autonomous racing platforms.

| Category | F1TENTH (1/10 scale, research) | Donkey Car (DIY beginner RC) | Racecar NEO (1/10 competition) | This Platform (custom Ackermann) |
|---|---|---|---|---|
| Price | ~$3,000 – $4,500+ | ~$250 – $400 | ~$800 – $1,800 | ~$500 |
| Primary Sensor | 2D LiDAR (Hokuyo UST-10LX) | Single monocular camera | Color cam + TOF depth cam + YDLIDAR | Stereo camera + IMU (full VIO) |
| Localization | LiDAR SLAM + particle filter | None (deep learning); GPS waypoint tracking available | Basic odometry or simulation | Stereo VIO + RTAB-Map 3D SLAM |
| Mapping | 2D occupancy grid | None | None in standard curriculum | Full 3D dense mapping |
| Depth Estimation | No (LiDAR only); optional RealSense D435i | None (default); optional RealSense D435 | Arducam TOF depth camera (480×640) | Stereo depth + 3D reprojection |
| IMU Fusion | Basic (VESC IMU + EKF) | MPU6050/9250 supported; no fusion algorithm | Adafruit IMU present; no fusion in curriculum | Full camera-IMU calibration (Kalibr) + EKF sensor fusion |
| Racing Algorithms | Gap Follow, Pure Pursuit, MPC, RRT | Behavioral cloning + GPS path-follow + CV line-follow | Wall follow, obstacle avoidance, line follow | Gap Follow, Pure Pursuit, MPC + behavioral cloning |
| Behavioral Cloning | Supported via research repos (mlab-upenn/f1tenth_il) | TF/Keras + Fastai | Not typical in curriculum | Production imitation learning on real hardware |
| Real-time Engineering | Partial (competition builds use real-time particle filter) | No real-time concerns | Python abstraction layer; real-time not a focus | Real-time ROS 2, timestamp correction, hardware-aware coding |
| Camera Calibration | Basic (standard ROS tools when camera added) | None | None | Full stereo + camera-IMU calibration with Kalibr |
| ROS Version | ROS 2 (Foxy) | No ROS | ROS 2 (Ubuntu 22.04) | ROS 2 (production standard) |
| Scale and Speed | 1/10 scale, ~30 mph | 1/16 scale (default), slow | 1/10 scale, ~20 mph | 1/12 scale, very high speed |
| Industry Readiness | High (but inaccessible) | Low | Low–Medium | High (same stack used in industry) |

Prices are approximate and vary by region and configuration.

Get In Touch

Contact

Interested in collaboration, research, or just talking robotics? Reach out directly.